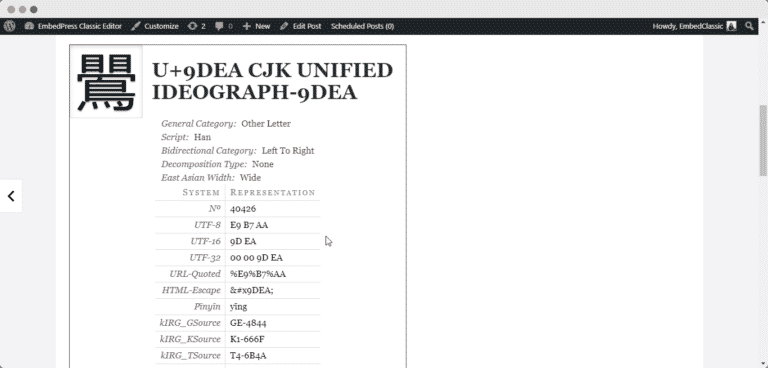

Trying to understand the subtleties of modern Unicode is making my head hurt. In particular, the distinction between code points, characters, glyphs and graphemes - concepts which in the simplest case, when dealing with English text using ASCII characters, all have a one-to-one relationship with each other - is causing me trouble.

Seeing how these terms get used in documents like Mathias Bynens' "JavaScript has a Unicode problem" or Wikipedia's piece on Han unification, I've gathered that these concepts are not the same thing and that it's dangerous to conflate them, but I'm kind of struggling to grasp what each term means.

The Unicode Consortium offers a glossary to explain this stuff, but it's full of "definitions" like this:

Abstract Character. A unit of information used for the organization, control, or representation of textual data.

Character. (3) The basic unit of encoding for the Unicode character encoding.

Glyph. (1) An abstract form that represents one or more glyph images. In displaying Unicode character data, one or more glyphs may be selected to depict a particular character.

Grapheme. (1) A minimally distinctive unit of writing in the context of a particular writing system.

Most of these definitions possess the quality of sounding very academic and formal, but lack the quality of meaning anything, or else defer the problem of definition to yet another glossary entry or section of the standard.

So I seek the arcane wisdom of those more learned than I. How exactly do these concepts differ from each other, and in what circumstances would they not have a one-to-one relationship with each other?

Character is an overloaded term that can mean many things.

A code point is the atomic unit of information. Each code point is a number which is given meaning by the Unicode standard.

A code unit is the unit of storage of a part of an encoded code point. In UTF-8 this means 8 bits, in UTF-16 this means 16 bits. A single code unit may represent a full code point, or part of a code point.
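To make the code point versus code unit distinction concrete, here is a minimal Python sketch. The `describe` helper is a hypothetical name introduced only for illustration; it relies on the fact that a Python 3 `str` is a sequence of code points, and it counts code units by encoding the string and dividing by the size of one unit. The last lines also show one case where the one-to-one relationship breaks down: what a reader perceives as a single "é" can be stored as either one or two code points.

```python
import unicodedata


def describe(text: str) -> dict:
    """Report code points and code-unit counts for a string (illustrative helper)."""
    utf8 = text.encode("utf-8")
    utf16 = text.encode("utf-16-le")  # the LE variant does not prepend a byte order mark
    return {
        "code_points": [f"U+{ord(ch):04X}" for ch in text],
        "utf8_units": len(utf8),         # one UTF-8 code unit is 8 bits
        "utf16_units": len(utf16) // 2,  # one UTF-16 code unit is 16 bits
    }


samples = [
    "A",           # ASCII: one code point, one code unit in both encodings
    "\u00e9",      # precomposed e-acute: one code point
    "e\u0301",     # "e" followed by a combining acute accent: two code points
    "\U0001F600",  # a code point outside the Basic Multilingual Plane
]

for sample in samples:
    print(repr(sample), describe(sample))

# The two spellings of e-acute differ as code point sequences,
# but NFC normalization composes the second into the first.
composed = unicodedata.normalize("NFC", "e\u0301")
print(composed == "\u00e9")
```

For the last sample, a single code point needs four UTF-8 code units and two UTF-16 code units (a surrogate pair), which is the "part of a code point" case. Grouping code points into user-perceived characters (grapheme clusters) is not covered by the Python standard library and is left out of this sketch.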
